Quality assurance lessens core, log data uncertainties

Quality-assurance procedures can lessen the uncertainties inherent in core and log data. Reducing these uncertainties will help optimize the development of oil and gas fields.1

Uncertainty means that a variety of outcomes may occur. The final result may be greater or less than expected. In the petroleum industry, deviations from expectation may have serious financial consequences.

Despite its importance, considerable confusion exists as to the best practice for calculating uncertainty. Making a decision on uncertain data alone is insufficient. The decision requires estimating all parameters contributing to the uncertainty and then adding them together in a way that displays the confidence level.

Quality assurance

In the last few years, quality control has grown in importance because of several factors.

First, competition has increased and, as a result, customers have more choices. Second, more and more companies have implemented quality-improvement programs that involve suppliers. The result from both these factors is that customers are more likely to define and measure the quality of products and services they receive.2

A concise definition is that quality is meeting specified requirements as agreed upon with the customer. Two important words in the definition are “specified” and “customer.” Without specified requirements, one cannot measure quality. Also, without defining the target or customer of the product or service, one cannot define the associated requirements or measure the conformance to those requirements.

Three important terms to define are:

- Quality control.

- Quality assurance.

- Total quality management.

With well cores and logs, quality control is an unpopular activity of checking the conformance of a given product to previously defined specifications. This quality control is undertaken after the fact and cannot improve a product or service; however, it can improve future logging efforts.

Quality assurance is a systematic approach to ensuring that a given process is adhered to consistently. Quality-assurance systems provide customers with confidence in the products and services they purchase. ISO-9000 is one such international system.

Total quality management is a business philosophy that seeks to release the organization’s potential to identify and eliminate customer problems, enhance business processes, and increase customer satisfaction. Total quality management involves active participation of all personnel including subcontractors and is critical to the success of any business.3

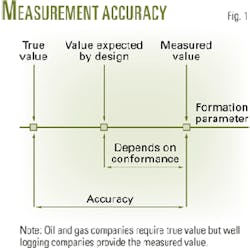

Logging companies provide a concrete and specific product, information, or data. A log is a product that needs quality attributes. The product provides measurements that differ from the true value of the formation. This difference is called accuracy (Fig. 1).

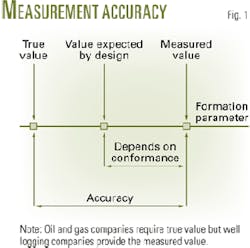

Logs cannot match completely the user’s requirements at the design stage. In addition, the product delivered in the field does not fit strictly with the one designed in research and engineering centers (Fig. 2).

Core data

The key factors in determining core data quality are similar to those for any other product and include effective assessment of needs, effective communication, effective planning, and effective delivery of a “no-hassle” product.

The analysis must clearly define strategies for taking core plugs. Taking plugs on a rigid statistical basis every foot to avoid selection bias yields measurements on long sections of nonreservoir rock and measurements on heterogeneous plugs having mixed lithologies and crossbedding.

Cores provide an understanding of the reservoir rock and can calibrate the porosity derived from logs such as density. One question is why spend money to measure nonreservoir rock? It is much better to cut good quality plugs in potential reservoir rock. The average properties are then correct for the net column but not for the gross column.
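The difference between net and gross averages can be illustrated with a short sketch. The plug porosities and reservoir flags below are invented for illustration only; the point is simply that an average taken over reservoir-quality plugs describes the net column and overstates the gross column.

```python
# Hypothetical plug data: (porosity as a fraction, True if cut in reservoir rock).
# These values are illustrative assumptions, not measurements from the article.
samples = [
    (0.22, True), (0.20, True), (0.03, False), (0.24, True),
    (0.02, False), (0.18, True), (0.04, False), (0.21, True),
]


def mean(values):
    """Arithmetic mean of a non-empty list."""
    return sum(values) / len(values)


# Average over reservoir plugs only (net column).
net_avg = mean([phi for phi, is_reservoir in samples if is_reservoir])

# Average over every plug, reservoir or not (gross column).
gross_avg = mean([phi for phi, _ in samples])

# Fraction of the sampled column that is reservoir rock.
net_to_gross = sum(1 for _, is_reservoir in samples if is_reservoir) / len(samples)

print(f"net average porosity:   {net_avg:.4f}")
print(f"gross average porosity: {gross_avg:.4f}")
print(f"net-to-gross ratio:     {net_to_gross:.3f}")
```

If only reservoir plugs are cut, the gross-column average has to be recovered separately, for example by weighting with a net-to-gross ratio from logs.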

The choice of a core analysis contractor is important because different laboratories will obtain different answers for the same type of measurement on the same rock.

Steps taken by the industry to standardize the results range from recommended practices to test plugs and more recently to the adoption and accreditation of quality management.

A useful alternative to these steps would be a more widespread use of laboratory check plugs. These provide a representative quality statement about the laboratory.

A wide variety of related but different experiments derive the required core parameters. Each test has both positive and negative attributes. Effective communication of these attributes is essential for making informed choices.

Before an analysis, a major factor in acquiring good core data is a critical assessment of how representative the samples are. For example, X-ray visualization techniques can ensure that plugs are cut in the correct orientation and are free of unrepresentative features or misoriented permeability barriers that can affect flow out of proportion to their influence in the reservoir.

The assessment of X-ray images of cores enables the nondestructive evaluation of intervals for subsequent core analysis measurement. The technique allows for the identification and avoidance of man-made artifacts.

Information on representative features enables informed sampling for core analysis.

Core quality assurance

Cores often provide the only direct measurements of reservoir properties. The measurements indicate hydrocarbon presence and distribution, storage capacity (porosity), and flow capacity (permeability). Information obtained from cores includes:

- Porosity, permeability, residual fluids, and lithology.

- Areal changes in porosity, permeability, and lithology required to characterize the reservoir.

- Irreducible water saturation or residual oil saturation.

- Unaltered wettability or saturation state.

- Directional permeability.

- Calibration or improved interpretation of logs.

- Depositional environments.

The industry often undervalues core information because of the perception that the quality of the data is limited. Many end users of core-analysis data have examples where the laboratory has not met the quality requirements. Typical problems that result in unrepresentative data are:

- Unsuitable preservation and sampling procedures.

- Sampling bias.

- Out-of-calibration test equipment.

- Not following standard procedures, or nonexistent standard procedures.

- Insufficient training of laboratory personnel.

- End-user requirements that are not communicated or not understood.

Some reasons for these problems include:

- Reliance on quality control instead of quality assurance.

- Lack of, or limited availability of, industry standards.

- Poor communication between the customer and laboratory in understanding each other’s requirements.

Organizations such as the Society of Core Analysts, a chapter at large of the Society of Professional Well Log Analysts (SPWLA), and the American Petroleum Institute (API) provide recommended practices that play an important role in opening up communications within the industry, improve the understanding of the role of core analysis, and generally contribute to better core analysis.

The efforts of these groups should continue, but at best they only can provide guidelines and recommendations.

Quality-assurance programs in the form of ISO 9000 and BS5750 can also improve data quality by ensuring improvements in the operation of laboratories and the procedures used.

Quality assurance, control

A common method for checking core-analysis data has been for companies to send sets of check samples to various laboratories. The laboratory runs the test and returns the data for comparison with the “correct” results.

The value of this quality-control check is limited. Apart from perhaps a laboratory taking extra care to ensure good data, the client does not have a guarantee that the samples run on different equipment or by a different operator would provide the same results.

An additional problem arises if the test conditions are not specified. For example, a gas-permeability test can be taken at different confining pressures with varying mean pressure and the results will vary accordingly. All the results will be correct for the given test conditions.
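One way to see why mean pressure matters is the Klinkenberg gas-slippage relation, which the text does not name and which is used here only as an assumed model: apparent gas permeability equals the equivalent liquid permeability multiplied by (1 + b / mean pressure). The liquid permeability and slip factor below are hypothetical, and the effect of confining pressure is not modeled.

```python
def apparent_gas_permeability(k_liquid_md, slip_factor_psi, mean_pressure_psi):
    """Klinkenberg relation: k_gas = k_liquid * (1 + b / p_mean).

    k_liquid_md       equivalent liquid permeability, md (hypothetical input)
    slip_factor_psi   Klinkenberg slip factor b, psi (hypothetical input)
    mean_pressure_psi mean pore pressure of the test, psi
    """
    return k_liquid_md * (1.0 + slip_factor_psi / mean_pressure_psi)


# Illustrative values only; real plugs need laboratory-determined b.
K_LIQUID_MD = 10.0
SLIP_FACTOR_PSI = 8.0

for p_mean in (15.0, 50.0, 200.0):
    k_gas = apparent_gas_permeability(K_LIQUID_MD, SLIP_FACTOR_PSI, p_mean)
    print(f"mean pressure {p_mean:6.1f} psi -> apparent gas permeability {k_gas:.2f} md")
```

Each printed value is a valid result for its own mean pressure, which is why a reported permeability is only comparable between laboratories when the test conditions are stated.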

A quality-assurance system ensures that laboratories run data on maintained and calibrated equipment, according to documented procedures with fully trained personnel. This system gives the customer greater confidence that the data are correct every time and that they clearly define the test conditions.4 5

Fit-for-purpose data

Because data requirements change during the life of a field, the data acquired must be fit for purpose. This involves the determination of the quality required for the type of data, its accuracy and precision requirements, and the quantity required at different stages of field life.

Because every field is different, fit-for-purpose data requirements vary from field to field and even from reservoir to reservoir within a field. Fit-for-purpose data during a field’s life cannot be put into a simple checklist of log, core, pressure, and flow tests on specific wells on specific dates.

Adequate reservoir characterization requires representative reservoir data. A difficulty arises when it is unclear whether a problem is a data-related issue or whether the interpretation is incomplete.

Sensitivity analyses can quantify the impact of potential errors. A measurement of whether a valid interpretation of good data has been made is the comparison of predicted vs. actual production and pressure responses. When they match, it is reasonable to conclude that the analysis has provided a valid reservoir description. Thus, the analysis does not require any more of this type of data.6
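A one-at-a-time sensitivity analysis can be sketched as follows. The volumetric formula (area × thickness × net-to-gross × porosity × (1 − Sw)) is a standard simplification assumed here, and every base value and relative error is hypothetical. Each input is moved up and down by its assumed error while the others stay at their base values, and the inputs are then ranked by their effect on hydrocarbon pore volume.

```python
def hydrocarbon_pore_volume(p):
    """Simplified volumetric estimate (assumed model, not from the article)."""
    return p["area"] * p["thickness"] * p["ntg"] * p["phi"] * (1.0 - p["sw"])


# Hypothetical base case and relative (fractional) errors on each input.
base = {"area": 2.0e6, "thickness": 30.0, "ntg": 0.70, "phi": 0.20, "sw": 0.30}
rel_error = {"area": 0.05, "thickness": 0.05, "ntg": 0.10, "phi": 0.05, "sw": 0.15}


def sensitivities(base, rel_error):
    """Fractional change in the result for a +/- error on each input, one at a time."""
    reference = hydrocarbon_pore_volume(base)
    result = {}
    for name, err in rel_error.items():
        high = dict(base)
        low = dict(base)
        high[name] = base[name] * (1.0 + err)
        low[name] = base[name] * (1.0 - err)
        swing = hydrocarbon_pore_volume(high) - hydrocarbon_pore_volume(low)
        result[name] = swing / (2.0 * reference)
    return result


s = sensitivities(base, rel_error)
ranked = sorted(s, key=lambda name: abs(s[name]), reverse=True)
for name in ranked:
    print(f"{name:10s} {s[name]:+.3f}")
```

With these assumed errors, net-to-gross dominates, which would suggest spending acquisition effort there first. A different set of errors would give a different ranking.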

Aspects that frequently require attention for a successful development include data coverage (x, y, z) and quantity; dynamic and static data; data quality (accuracy and precision); and integration and reconciliation of different measurement scales.7

The accompanying box lists the details.

Log interpretation uncertainties

In addition to the most likely value of hydrocarbons in place, the reservoir engineer needs to know the uncertainty of this value.

Two methods for quantifying uncertainties propagated from log data are: the Monte Carlo method, which requires a few lines of programming but has a long computation time, and an analytical solution, which requires a sophisticated program but has a short computation time.

These analyses estimate the dispersion of the results caused by random and sampling errors. The deviation from the true value, caused possibly by systematic errors, is not considered.

A decision concerning a field appraisal or development process must account for the economic risk connected to the uncertainty of hydrocarbons in place.

Although most log interpretations only provide the most likely petrophysical parameter values, the reservoir engineer also needs to know the uncertainty for these values. A log interpretation should therefore provide, in addition to the usual results, the uncertainties for these values.8 9

Three different ways of managing uncertainties are:

1. Perform sensitivity tests on each source of uncertainty to determine those with the greatest effect and improve their determination and acquisition.

2. Interpret each option or at least indicate the alternative options, when the results indicate several possibilities.

3. Compute the final uncertainty derived from all known uncertainties of the data so that the reservoir engineer can take these into account in the evaluation. Uncertainties include:

- Uncertainties on the recorded logs. Logging vendor information usually does not permit quantification of these uncertainties. This point may be taken into account or might be negligible compared with the other uncertainties on the logs.

- Environmental corrections. Most input parameters for environmental corrections are rough estimates, and the equations are often approximate, even though the correction factors obtained with equations might be large.

For example, in a 12 1/4-in. borehole diameter, the log analysis must correct the neutron-derived porosity log for an approximate standoff effect, which depends on the exact position of the tool in the well.

If standoff varies between 0.0 and 0.5 in., this correction leads to an uncertainty of about ±1.5 porosity units (pu).

Another example is true formation resistivity (Rt) and flushed-zone resistivity (Rxo) determined from a set of resistivity logs with the assumption of an invasion profile (generally a step profile). The uncertainties of the volumetric log response and the invasion profile approximation cause an uncertainty on saturations and also on the porosity, which depends on Rxo.

- Investigation depths. The different volumes of investigation by each tool will create interpretation uncertainties. Log interpretation provides results based on measurements with different volumes of investigation (vertically and horizontally). These results, therefore, are valid only as an approximation and should include an estimated uncertainty.

- Equations used for the interpretation. The transforms between log responses and usable petrophysical parameters, especially for the sonic log and the neutron log, are not known completely. This also applies to saturation equations.

- Interpretation parameters. Different log analysts on the same set of data may provide different interpretations.
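As a rough illustration of the third approach, several uncertainty sources on a single neutron-porosity reading can be combined by root-sum-square. This treatment assumes the sources are independent and that each ± figure can be read as one standard deviation; neither assumption is stated in the text. Only the 1.5-pu standoff figure comes from the example above, and the other two entries are hypothetical.

```python
import math

# Uncertainty contributions in porosity units (pu).
# "standoff" uses the +/-1.5 pu figure quoted in the text; the other two
# entries are hypothetical placeholders added for illustration.
contributions_pu = {
    "standoff": 1.5,
    "tool_statistics": 1.0,
    "lithology_transform": 0.5,
}


def combine_in_quadrature(contributions):
    """Root-sum-square of independent uncertainty contributions."""
    return math.sqrt(sum(value ** 2 for value in contributions.values()))


total_pu = combine_in_quadrature(contributions_pu)
print(f"combined uncertainty: +/-{total_pu:.2f} pu")
```

Correlated sources would need covariance terms, so the root-sum-square figure should be read as a first approximation only.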

Monte Carlo method

The Monte Carlo method involves a large number of interpretations each having different inputs for the log values as well as for the interpretation parameters. These inputs have random values inside a normal distribution centered on the reference values. The most likely interpretation defines the reference values (Fig. 3a).

Fig. 3b shows the interpretation after modifying the parameters, and Fig. 3c shows it after modifying both the parameters and the shale volume (Vsh) log. Fig. 4 then provides a computation of sums (porosity × height and hydrocarbon height) and averages (average porosity and water saturation). Statistics computed on these sums and averages provide histograms and standard deviations.

The method requires a few lines of programming and a long computation time. The uncertainty is determined for the required intervals, not for each log sample.

This method uses a deterministic interpretation algorithm (n equations, n unknowns).

A log analyst can determine easily the uncertainty on the input parameters; however, the uncertainty on the log values must be estimated from previous experience.

This computation does not include the uncertainties in the log values.
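The procedure can be sketched in a few lines. This is a minimal illustration rather than the program behind the figures: it assumes Archie's equation as the deterministic interpretation, and the zones, reference parameters, and standard deviations are all hypothetical. The `perturb_logs` flag is also an assumption of this sketch; it switches between varying only the interpretation parameters and varying the log values as well.

```python
import random
import statistics

# Hypothetical zones: (height in m, porosity fraction, true resistivity Rt in ohm-m).
ZONES = [(2.0, 0.20, 20.0), (3.0, 0.25, 30.0), (1.5, 0.15, 10.0)]

# Reference (most likely) interpretation parameters; illustrative values.
REF = {"a": 1.0, "m": 2.0, "n": 2.0, "rw": 0.05}

# Assumed one-standard-deviation uncertainties.
PARAM_SD = {"a": 0.0, "m": 0.1, "n": 0.1, "rw": 0.005}
PHI_SD = 0.01      # porosity log, absolute (fraction)
RT_REL_SD = 0.05   # resistivity log, relative


def interpret(zones, p):
    """Deterministic interpretation: Archie Sw per zone, then sums and averages."""
    phi_h = 0.0   # porosity x height
    hc_h = 0.0    # hydrocarbon height
    height = 0.0
    for h, phi, rt in zones:
        sw = (p["a"] * p["rw"] / (phi ** p["m"] * rt)) ** (1.0 / p["n"])
        sw = min(max(sw, 0.0), 1.0)  # keep saturation physical
        phi_h += phi * h
        hc_h += phi * h * (1.0 - sw)
        height += h
    return {
        "phi_h": phi_h,
        "hc_h": hc_h,
        "avg_phi": phi_h / height,
        "avg_sw": 1.0 - hc_h / phi_h,  # pore-volume-weighted average
    }


def monte_carlo(n_runs=2000, perturb_logs=True, seed=0):
    """Repeat the interpretation with normally distributed inputs."""
    rng = random.Random(seed)
    results = []
    for _ in range(n_runs):
        p = {name: rng.gauss(value, PARAM_SD[name]) for name, value in REF.items()}
        zones = ZONES
        if perturb_logs:
            zones = [
                (h,
                 max(rng.gauss(phi, PHI_SD), 1e-3),
                 max(rng.gauss(rt, RT_REL_SD * rt), 1e-3))
                for h, phi, rt in ZONES
            ]
        results.append(interpret(zones, p))
    return results


reference = interpret(ZONES, REF)
runs = monte_carlo()
for key in reference:
    values = [r[key] for r in runs]
    print(f"{key}: reference={reference[key]:.4f} "
          f"mean={statistics.mean(values):.4f} sd={statistics.stdev(values):.4f}")
```

The standard deviations apply to the interval as a whole, not to individual log samples, and the run count controls the trade-off between computation time and the stability of the statistics.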

Analytical method

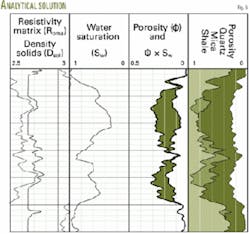

The analytical method includes an optimization interpretation algorithm (n equations, m unknowns, n>m). As in the Monte Carlo method, the set of the most likely values of all interpretation parameters defines the reference solution. A standard interpretation process provides the results shown in Fig. 5.

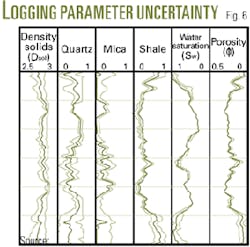

An analytical solution computes a continuous curve of standard deviation for each output component (matrix, clay, and fluids). The porosity, water saturation, and solid density standard deviations are then computed as combinations between some components. Fig. 6 shows the logs of maximum and minimum values derived from these standard deviations.

This method requires a sophisticated program, but once written, the computation of uncertainties is fast and provides a value of uncertainty for each log sample (uncertainties could appear as curves).

As with the Monte Carlo method, the log analyst can easily estimate the uncertainty on the interpretation input parameters, but the uncertainty on the log values must be computed beforehand.
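The article's analytical method rests on an optimization-based interpretation, which is not reproduced here. As a simpler stand-in, the sketch below applies first-order (linearized) error propagation to Archie's water-saturation equation, assuming independent inputs, to show how a standard deviation can be obtained for each log sample without repeated simulation. The equation choice, the sample values, and the input uncertainties are all assumptions.

```python
import math


def archie_sw(phi, rt, rw, a=1.0, m=2.0, n=2.0):
    """Archie water saturation: Sw = (a * Rw / (phi**m * Rt)) ** (1/n)."""
    return (a * rw / (phi ** m * rt)) ** (1.0 / n)


def sw_with_std(phi, rt, rw, a, m, n, s_phi, s_rt, s_rw, s_m, s_n):
    """Return (Sw, standard deviation of Sw) by first-order propagation.

    Each term is |d ln(Sw) / d x| * sigma_x for one input x, derived from
    ln(Sw) = (ln a + ln Rw - m ln phi - ln Rt) / n. Inputs are assumed
    independent, so the terms add in quadrature.
    """
    sw = archie_sw(phi, rt, rw, a, m, n)
    terms = [
        (m / n) * s_phi / phi,        # porosity
        (1.0 / n) * s_rt / rt,        # true resistivity
        (1.0 / n) * s_rw / rw,        # water resistivity
        (math.log(phi) / n) * s_m,    # cementation exponent
        (math.log(sw) / n) * s_n,     # saturation exponent
    ]
    relative_std = math.sqrt(sum(t * t for t in terms))
    return sw, sw * relative_std


# Hypothetical log samples: (depth in m, porosity fraction, Rt in ohm-m).
samples = [(1000.0, 0.20, 20.0), (1000.5, 0.22, 25.0), (1001.0, 0.15, 10.0)]

for depth, phi, rt in samples:
    sw, sd = sw_with_std(phi, rt, rw=0.05, a=1.0, m=2.0, n=2.0,
                         s_phi=0.01, s_rt=0.05 * rt, s_rw=0.005,
                         s_m=0.1, s_n=0.1)
    print(f"{depth:7.1f} m  Sw={sw:.3f}  min={max(sw - sd, 0.0):.3f}  max={min(sw + sd, 1.0):.3f}")
```

Because the result is a closed-form expression, it can be evaluated at every depth to give continuous minimum and maximum curves, at the cost of assuming that the linearization holds over the range of the input errors.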

References

1. Thompson, B., and Theys, P., “The importance of quality,” The Log Analyst, Vol. 35, No. 5, 1994, pp. 13-14.

2. Head, E.L., et al., “A new technique for log quality control,” Transactions of the European Formation Evaluation Symposium, Vol. 15, 1993.

3. Theys, P., Log quality control and error analysis, prerequisite to accurate formation evaluation, Paper V, 11th European Formation Evaluation Symposium, SPWLA, Oslo, Sept. 1988.

4. Kimminau, U., and Theys, P., “Terminology for log quality assurance,” The Log Analyst, Vol. 32, No. 6, 1991, p. 680.

5. Theys, P., Log data acquisition and quality control, Technip, Paris, 1991.

6. Owens, J., and Cockcroft, P., “Sensitivity analysis of errors in reserve evaluations due to core and log measurement inaccuracies,” P.E. Worthington, Ed., Advances in Core Evaluation Accuracy and Precision in Reserve Estimation, Gordon and Breach, 1990, pp. 381-94.

7. Owens, J., “Fit-for-purpose data during field life,” The Log Analyst, Vol. 35, No. 5, 1994, pp. 58-60.

8. Ventre, J., “Propagation of uncertainties in log interpretation,” The Log Analyst, Vol. 35, No. 5, 1994, pp. 60-67.

9. Hamada, G.M., and AlAwad, M.N., “Evaluating uncertainty in Archie’s water saturation equation parameters determination methods,” Paper No. SPE 68083, SPE Middle East Oil Show and Conference, Bahrain, Mar. 17-20, 2001.

10. Schneider, D., et al., “Processing and quality assurance of unevenly sampled nuclear data recorded while drilling,” Paper RR, SPWLA 35th Annual Logging Symposium, Tulsa, June 19-22, 1994.

The author

Gharib M. Hamada is professor of well logging and applied geophysics at King Fahd University, Saudi Arabia. Previously he was with Cairo University, Technical University of Denmark, Sultan Qaboos University, and King Saud University. His main research interests are well logging technology, formation evaluation, and seismic data analysis. Hamada holds a BS and MS in petroleum engineering from Cairo University, and a DEA and D’Ing from Bordeaux University, France. He is a member of SPE and SPWLA.

Fit-for-purpose data

A successful development plan requires that attention be paid to data coverage (x, y, z), dynamic and static data, data quality, and integration and reconciliation of different measurement scales.

Data coverage (x, y, z) and quantity involve knowing if the data:

- Capture and describe reservoir heterogeneity and flow unit continuity.

- Are from crestal or downdip wells.

- Include aquifer data.

- Include wells with sufficient spacing to ensure that field characteristics distinguish different scales (geologically, petrophysically, and hydraulically) and allow for the construction of meaningful maps.

- Include 3D seismic.

- Solve the problems of geophysicists, reservoir geologists, and reservoir and simulation engineers.

- Indicate the validity of single fieldwide values for cementation and saturation exponents.

- Provide sensitivity parameters for estimating reserves.

Dynamic, static data include:

- Acquiring data for reservoir management purposes during the field life to monitor such parameters as pressure depletion, fluid movement, and water breakthrough.

- Determining if net cutoffs for one part of a field are relevant to other parts and flow units in the field.

- Verifying if spinner surveys evaluated the net-to-gross pay cutoffs.

- Determining if net-to-gross cutoffs evolved based on production data during field life and assessing their impact on reserves estimates.

- Obtaining formation pressure data and determining if some reservoir zones deplete faster than others and if this was predicted.

- Determining if baseline pulsed-neutron decay logs were obtained.

- Determining if permeability studies yield adequate correlations with well-test results.

Data quality includes:

- Determining the precision and accuracy needed for different parameters.

- Forecasting the accuracy and precision needs (and hence cost) during field life. This may involve more expensive as well as cheaper techniques and data.

- Identifying any problems in early wells subsequently solved by modifying data acquisition programs.

- Evaluating if the field model is cohesive and reasonable.

Integration and reconciliation of different measurement scales require:

- Determining the value of the money spent on integrating data types and the criteria for determining that value.

- Reconciling the different scales of measurement.

- Determining if the vertical density and resolution of data were considered, such as minipermeameter, core plug, and well tests.

- Integrating flowmeter surveys with data from image logs, conventional openhole logs, and core permeability for evaluating such parameters as net-to-gross pay.

- Determining whether disagreements in datasets are a problem of data quality or the quality of its interpretation.